Table of Contents

- 1. Introduction to AI Fluency

- 2. The AI Fluency Framework

- 3. Deep Dive 1: What is Generative AI? (Part 1)

- 4. Delegation

- 5. Description

- 6. Deep Dive 2: Effective prompting techniques

- 7. Discernment

- 8. Diligence

- 9. Conclusion & certificate

- 10. Addendum: Things Copilot suggested to me while I edited this document

Anthropic AI Fluency: Framework & Foundations course https://www.anthropic.com/learn/claude-for-you

Ran through by R.W. Singh on 2026-04-19.

1. Introduction to AI Fluency

Key takeaways

- This course focuses on human-AI collaboration, not just understanding AI as a technology

- AI Fluency means engaging with AI systems effectively, efficiently, ethically, and safely

- The AI Fluency Framework centers on the "4D" competencies of Delegation, Description, Discernment and Diligence

- The goal is to develop lasting skills that remain relevant as AI technology evolves

- Effective AI collaboration requires both practical skills and a fundamental shift in how we think about working with AI

2. The AI Fluency Framework

2.1. Why do we need AI Fluency?

“How do we move beyond knowing a few prompt tricks to developing a thoughtful and responsible approach that will continue to serve us well as AI keeps evolving?”

We discuss how AI Fluency involves developing practical skills, knowledge, insights, and values that help you interact with AI systems in ways that are effective, efficient, ethical, and safe. We also introduce three ways people engage with AI:

- Automation: The AI completes specific tasks based on your instructions.

- Augmentation: You and AI collaborate as creative thinking and task execution partners.

- Agency: You configure AI to work independently on your behalf, establishing its knowledge and behavior patterns rather than just giving it specific tasks.

“AI isn’t just a tool. It’s a technology that can act as a tool, but also as a medium or as a partner or co-creator, and sometimes all of these at once.”

I don’t trust a probabilistic bot to complete any sort of tasks for me, I don’t trust the output it gives, and I don’t want to use it as a soundboard to brainstorm with. That’s what the humans on Mastodon are for.

He says you could “power a dynamically interactive character in a game”, but why would I design a video game that could go off the rails, that I don’t have creative control over?

2.2. The 4D Framework

Key takeaways

- AI Fluency means engaging with AI in ways that are effective, efficient, ethical, and safe

- There are three primary ways we engage with AI: (Automation/Augmentation/Agency)

- The AI Fluency Framework consists of four core competencies (the 4Ds):

- Delegation: Deciding what work to do with AI vs. yourself

- Description: Communicating effectively with AI systems

- Discernment: Evaluating AI outputs critically

- Diligence: Ensuring responsible AI collaboration

- These competencies apply across all three ways of working with AI

- Developing these competencies prepares you for evolving AI capabilities

Figure 1: Understand your goal and the problem that you are trying to solve. Know what AI systems can and can't do well. Decide how to divide the work between you and the AI.

The text summaries beneath the videos feel very surface-level and repetitive. Probably AI generated. Not worth much.

The videos feel more like marketing videos rather than actual educational videos.

“Think about a research project you’re working on. You might decide to have your AI assistant review lengthy documents and data, and then engage in thoughtful discussion about the implications and findings, but reserve the critical analysis and final conclusions for yourself.”

I would not trust that I would glean all of the information, or the correct information, were I to delegate the looking at “documents and data” to an AI bot. It would be best for me to invest the time to do that work myself, keeping notes and synthesizing my own products.

Figure 2: What you want the final output to be. How you want the AI to approach the task. How you want the AI to behave.

I don’t want to verbally program a bot in English every time I need a task done.

Figure 3: Is the output useful and correct? Is the AI taking the right approach? Is the AI behaving as desired?

Why would I rely on SOFTWARE that gives INCORRECT and UNUSEFUL results, to do things like “look through data for my research project”? How does this seem like a useful tool?

“Most of our interactions with AI involve small loops of description and discernment: describing what we need, evaluating what we get, refining our request, and so on.”

Or… I could just use my brain. I could generate ideas in my brain. I could create drafts, and then revise those drafts.

Figure 4: Ensuring accuracy and taking responsibility. Honesty and transparency. Ethical use and critical awareness.

“When making important decisions with AI assistance, how are you verifying the accuracy of the information presented to you?”

Or you could find the accurate information and synthesize from there instead of working “backwards” like this.

“Have you considered how to be transparent about the involvement of AI?”

Have you considered? Did you CONSIDER IT? Is the CONSIDERING the ethical step? What is the ethical result? Or do we just CONSIDER it?

“Are you willing to be accountable for the AI assisted work you have done?”

No, I am not.

3. Deep Dive 1: What is Generative AI? (Part 1)

3.1. Generative AI fundamentals

“Generative AI refers to artificial intelligence systems that can create new content rather than just analyzing existing data.”

But to generate """NEW DATA""" it must be trained on existing data; an LLM cannot generate """NEW DATA""" without a training data set.

“They’re called language models because they’re trained to predict and generate human language, and large because they contain billions of parameters - mathematical values that determine how the model processes information, somewhat like synaptic connections in your brain.”

These parameters are probabilistic WEIGHTS between TOKENS along many dimensions. It is not “processing information” like a brain.

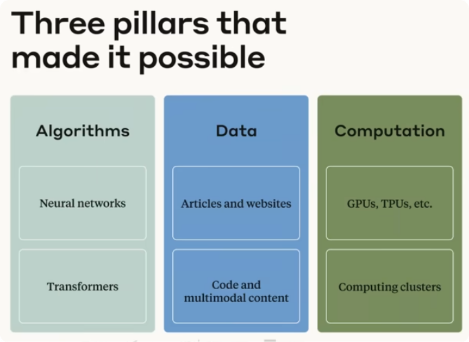

Figure 5: Three pillars that made it possible. Algorithms - Neural networks and Transformers; Data - Articles and websites, and Code and multimodal content; Computation - GPUs, TPUs, etc, and Computing clusters

Figure 6: Scaling laws - Compute and data, and Model intelligence

“The combination of these three factors led to an important discovery known as the Scaling Laws. These empirical findings showed that as models grew larger and trained on more data with more computing power, their performance improved in predictable ways. More surprisingly, researchers found that entirely new capabilities began to emerge as these models grew larger. Abilities no one explicitly programmed, like reasoning through problems step-by-step or adapting to new tasks with minimal instruction.”

Did it, though? Such magical thinking.

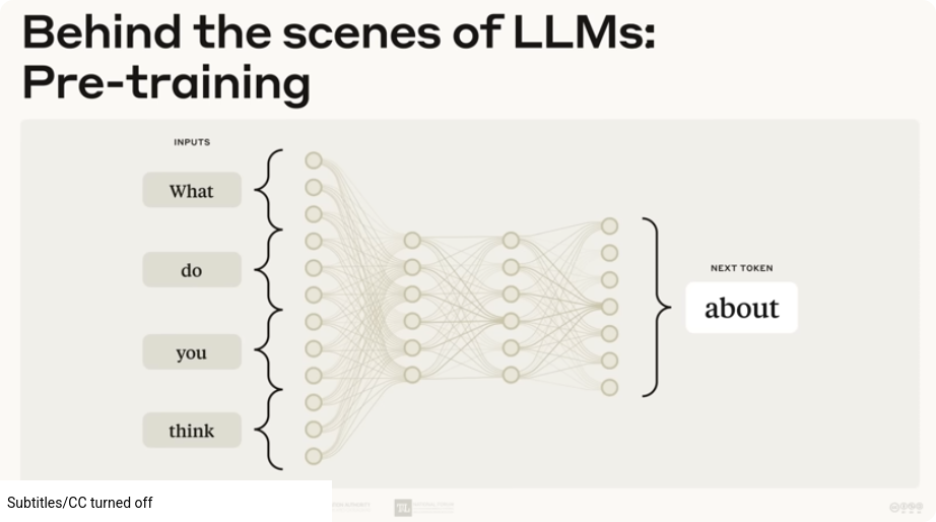

Figure 7: Behind the scenes of LLMs: Pre-training; a diagram of inputs ("What do you think"), a series of interconnected nodes, and then the output "Next token: About".

“During initial training, also called pre-training, LLMs like Claude analyze patterns across billions of text examples. Imagine reading every website and piece of text you can find, not just to absorb information, but to understand the statistical relationships between words, phrases, and concepts.”

The latter is the ONLY thing this training does – the statistical relationships between tokens – The training does NOT make the AI read every website and piece of text “to absorb information”.

“At this stage, the model essentially builds something like a complex map of language and knowledge. This pre-training process involves showing the model text and asking it to predict what comes next. Through many iterations, the model gradually refines its predictions, learning the patterns that make language coherent and meaningful.”

It is trained on what grammatically correct looking text looks like, not what any of it means.
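To make "showing the model text and asking it to predict what comes next" concrete, here is a minimal sketch of a word-pair (bigram) counter in Python. It is a toy, not how a real LLM is built: real models use neural networks over subword tokens rather than a lookup table of word counts. The corpus and the names `train_bigrams` and `predict_next` are hypothetical, made up for this illustration. What it does show is that this kind of prediction comes purely from how often words appeared next to each other.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical toy corpus; a real training set is vastly larger.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)

print(predict_next(model, "the"))    # cat  ("the cat" appears twice)
print(predict_next(model, "sat"))    # on
print(predict_next(model, "zebra"))  # None (never seen in the corpus)
```

Whatever follows a word most often in the corpus wins, whether or not it is true or sensible.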

“After pre-training, models undergo additional training called fine-tuning, where they learn to follow instructions, provide helpful responses, and importantly, avoid generating harmful content. This often involves human feedback to improve the model’s performance, as well as reinforcement learning, which uses rewards and penalties to shape the model’s behavior toward being more helpful, honest, and harmless, in the case of Anthropic’s models.”

In order to do this we employ (and underpay) people in the “global south” to review and rate content generated by the AI, exposing these people to terrible content to have them manually mark it as harmful so that “we” don’t have to see it.

Figure 8: Anthropic goals of fine-tuning: Helpful, Honest, Harmless

IT IS NOT HARMLESS. NONE OF THIS IS HARMLESS.

“Once models are trained, they are then deployed for you to interact with. When you interact with Claude […] you’re providing a prompt, which is text that the model reads and then continues from based on patterns it learned during training. The model isn’t retrieving pre-written answers from a database. Instead, it’s generating new text that statistically follows from what you’ve written.”

THAT IS WHAT IT DOES.

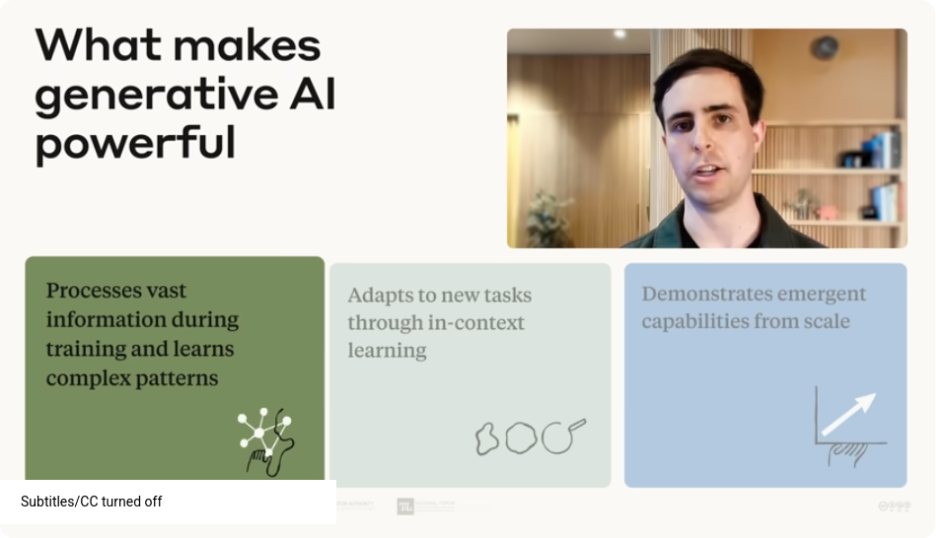

Figure 9: What makes generative AI powerful: Processes vast information during training and learns complex patterns. Adapts to new tasks through in-context learning. Demonstrates emergent capabilities from scale.

“As these models grow larger, they develop abilities that weren’t explicitly designed into them, sometimes surprising even their creators.”

I cannot believe that these people believe that their statistical word gathering model is somehow developing emergent behaviors via this method, and they speak as if the model has any kind of actual “intelligence”. How do you train intelligence on just words? There is no context for the real world, for physical sensation. There is no thinking in simply crunching tons of human language.

3.2. Capabilities & limitations

Key takeaways

- Generative AI creates new content (text, images, code) rather than just analyzing existing data

- Modern systems like LLMs were made possible by three key developments:

- Algorithmic and architectural breakthroughs (especially the transformer architecture)

- Vast amounts of digital training data

- Dramatic increases in computational power

- Generative AI learns through two stages: pre-training (analyzing patterns across billions of examples) and fine-tuning (learning to follow instructions and provide helpful responses)

- Current capabilities include versatility across tasks, conversational awareness, and the ability to connect with external tools

- Current limitations include knowledge cutoff dates, potential for hallucinations, context window constraints, and challenges with complex reasoning

- The most effective applications combine human and AI strengths, with humans providing critical thinking, judgment, creativity, and ethical oversight

“You might be amazed at how versatile modern language models can be. They’re skilled with language in ways that seemed impossible just a few years ago. Crafting emails that capture your voice, condensing lengthy reports into clear summaries, translating between languages, and explaining complex topics across countless fields from microbiology to marketing strategy.”

- 404 Media: Wikipedia Bans AI-Generated Content

- 404 Media: AI Translations Are Adding ‘Hallucinations’ to Wikipedia Articles

“What’s particularly notable is how these models can shift between different tasks without needing additional training. The very same system that helps you write poetry or brainstorm ideas for your birthday party can turn around and help you understand quantum computing concepts or analyze quarterly business trends, all through simple conversation.”

I don’t want its help to write poetry, I don’t want its help to brainstorm birthday party ideas, and I don’t trust it to give me correct information about quantum computing (and I am not knowledgeable enough to VERIFY THAT THE INFORMATION IS CORRECT – wasn't one of the LITERACY ITEMS from previously that we assess its output for usefulness and correctness?), and I do not trust it to analyze quarterly business trends or crunch any kind of data because it is going to skip over some things and it is going to generate (“hallucinate”) things that were not there.

“AI models are bounded by their training data. LLMs have a knowledge cutoff date based on when they were trained, the point after which they have no innate knowledge of the world.”

GEE SURE SOUNDS LIKE THIS WOULD BE HANDY FOR ANALYZING QUARTERLY BUSINESS TRENDS WHEN IT CANNOT COMPARE YOUR BUSINESS’ DATA TO CURRENT NEWS AND TRENDING CULTURE.

“The training process doesn’t verify every fact in the training data. This means models can sometimes learn and reproduce inaccuracies that were present in their training data.”

Besides the human-driven verification process in training (if they even know if something is inaccurate, and hopefully don’t miss something), I wouldn’t even claim that “many” or “most” facts are verified. Again, it’s scraping large amounts of text and generating a statistical probability of these words being together.

For example, if there are a lot of posts on the internet about “VACCINES CAUSE AUTISM!” then it will have a statistical bias towards generating words in this pattern. There is no “fact checking” going on. And if some misinformation has a large foothold on the Internet (where most of the training data comes from), its representation in the model’s output will be significant.

“They can also make mistakes when trying to piece together information they’ve learned.”

We shouldn’t be using anthropomorphizing language like “learned” for models.

“This leads to what is often called a hallucination. AI confidently stating something that sounds plausible but is actually incorrect. Unlike search engines that simply retrieve existing documents, LLMs generate responses based on statistical patterns, sometimes producing hallucinations.”

Is this one of your great fantastic emergent features that wasn’t explicitly trained in? (I mean no it wasn’t explicitly added to models as a feature but THE ENTIRE WAY THAT LLMs ARE TRAINED AND WORK MEANS THAT HALLUCINATIONS ARE INEVITABLE, A CORE FEATURE.)

“Imagine a friend who tells a story with absolute confidence only to have the details completely wrong. AI can sometimes be like that.”

I don’t want to rely on a tool that is “sometimes like that”.

“Another important constraint is the context window we mentioned earlier. As a reminder, that’s the amount of information an AI can process at one time. Every LLM has a maximum limit to how much information it can consider during a single interaction. If this limit is exceeded, the AI won’t be able to remember what falls outside the window, usually on a first in first out basis. […] This can limit its ability to process large documents or remember the entire conversation.”

Guess you had better pay for the best plan so you don’t get the AI with ADHD.
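The "first in first out" behaviour in that quote can be sketched with a fixed-size buffer. This is a simplified illustration, assuming whole words as tokens and a window of four; real systems count subword tokens, have far larger limits, and may handle overflow differently from product to product. The name `make_context` is hypothetical.

```python
from collections import deque

def make_context(max_tokens):
    """A fixed-size buffer: appending past the limit drops the oldest items."""
    return deque(maxlen=max_tokens)

context = make_context(max_tokens=4)
for token in "my name is Ada and I like tea".split():
    context.append(token)

print(list(context))     # ['and', 'I', 'like', 'tea']
print("Ada" in context)  # False: the name fell out of the window
```

Anything pushed out of the buffer is simply gone as far as later steps are concerned.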

“Furthermore, unlike traditional software that produces identical outputs given the same inputs, LLMs are somewhat unpredictable by default – also known as non-deterministic.”

WHY WOULD I WANT TO RELY ON SOMETHING THAT IS NOT PREDICTABLE? Software best practice has spent DECADES on AVOIDING UNDEFINED BEHAVIOR. Why would we want to entrust any important information or processes to something unpredictable?

“This variability stems from the nature of how these models generate text. They’re making probabilistic decisions about what text should come next based on patterns in their training data and certain settings that developers can tweak. This creative variability can be great for brainstorming and generating diverse ideas, but requires awareness when consistency or accuracy are critical.”

BUT HERE, TEACH ME QUANTUM PHYSICS IN A SIMPLE CONVERSATION.
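The "probabilistic decisions" and "settings that developers can tweak" in the quote above usually refer to sampling from a probability distribution over candidate next tokens, often with a temperature setting. Below is a minimal sketch of that idea, assuming a made-up list of three candidate tokens and made-up scores; `softmax` and `sample_token` are illustrative helpers, not any vendor's API.

```python
import math
import random

def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(tokens, scores, temperature, rng):
    """Pick one token at random, weighted by its softmax probability."""
    probs = softmax(scores, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical candidates and scores for the next token.
tokens = ["about", "of", "is"]
scores = [2.0, 1.0, 0.5]

rng = random.Random()  # unseeded, so the output can differ from run to run
print([sample_token(tokens, scores, 1.0, rng) for _ in range(5)])

# As temperature approaches zero, nearly all probability goes to the top score.
print(softmax(scores, temperature=0.05))
```

Same input, different output on each run unless the random seed is fixed or the temperature is pushed toward zero.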

“While these models are improving rapidly, they’ve historically shown limitations with complex reasoning tasks. Particularly with mathematical or logical problems requiring multiple steps.”

Because it’s trained on text patterns it has seen before, and we don’t just post tons and tons of math equations and solutions online. It isn’t doing the computations, it’s looking for how many times it’s seen “2+2=4” online and in books.

“The good news is that newer reasoning or extended thinking models specifically designed to think step-by-step are showing strong progress in these areas.”

Oh good, “strong progress”. Coming soon.

“While models like Claude can now access external tools, they may still lack access to specific data sources or specialized tools that would be needed for certain tasks. It’s like having a brilliant colleague who can’t access your company’s internal database. Their ability to help will be limited no matter how smart they are. If a model doesn’t have access to a piece of data or tool that is needed to answer a question, then it should not come as a surprise that it won’t be able to help answer the question.”

So let us tap into your company’s data. Tee-hee.

“Researchers are working to address current limitations through techniques like retrieval augmented generation which connects models to external knowledge and data sources as well as expanding their ability to use tools and improving their reasoning capabilities.”

What isn’t being stated here for the layperson is that “using tools” probably means spinning up a program (a traditional piece of software or script) to do some operation and then return the result. You could also run a program, do some operation, and check the result yourself.
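A rough sketch of what that can look like from the host program's side: the model's output is treated as a structured request, an ordinary function is run, and the result is handed back. Everything here is an assumption for illustration. The JSON shape, the `TOOLS` table, and `run_tool_request` are hypothetical, real tool-calling protocols differ between vendors, and `fake_model_output` is a hard-coded string standing in for a model.

```python
import json

# Ordinary, deterministic functions that the host program exposes as "tools".
TOOLS = {
    "add": lambda a, b: a + b,
    "word_count": lambda text: len(text.split()),
}

def run_tool_request(request_json):
    """Parse a tool request, run the matching function, return its result."""
    request = json.loads(request_json)
    name = request["tool"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](*request["args"])

# Hard-coded stand-in for what a model might emit; no model is called here.
fake_model_output = '{"tool": "add", "args": [2, 2]}'
print(run_tool_request(fake_model_output))  # 4
```

The arithmetic is done by the plain `add` function, which could just as easily be called directly.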

4. Delegation

4.1. A closer look at Delegation

Key takeaways

- Delegation is about making thoughtful decisions about what work to do yourself, what to do together with AI, or what to let AI handle independently, and how to distribute those tasks.

- Problem Awareness means clearly understanding your goals and the nature of the work before involving AI.

- Platform Awareness involves understanding the capabilities and limitations of different AI systems.

- Task Delegation is the process of thoughtfully distributing work between humans and AI to leverage the strengths of each.

- Effective delegation requires both domain expertise and an understanding of AI capabilities.

- The goal isn't to automate everything, but to create the most effective human-AI partnership for any given task or goal.

Figure 10: Understanding the problem you're trying to solve. Understanding the capabilities of available AI tools. Breaking down complex work into smaller parts. Making strategic decisions about who does what. Choosing the right mode of interaction.

Figure 11: Problem Awareness - The ability to clearly define your goals and understand what work is needed before involving AI tools. What does "success" look like? What kind of thinking and work is needed to get there? AI fluency begins with and depends on your expertise.

Figure 12: Platform Awareness - A working knowledge of available AI systems and their specific capabilities and limitations. Understanding the unique strengths and limitations of available options. Which models perform best for the work you have in mind? Which systems prioritize speed, or creativity, or depth, or accuracy?

Figure 13: Task Delegation - The strategic process of dividing work between humans and AI. What could be usefully automated? Where would augmentation create more value than working separately? What should be done by a human alone? What could be done by an agent on your behalf?

“The landscape moves quickly. The best approach is hands-on. Experiment with different AI systems as often as you can and develop your own insights based on personal experience.”

Who needs an expert to figure things out and report on it? Instead, how about you pay for a bunch of products – as often as you can! – so that we can blame you for “not having enough personal experience or insights” if the products don’t work properly. Clearly you didn’t try out enough products.

“Ask yourself: Which specific parts of your workflow would benefit from automation? Or where would an augmentation approach create more value than either working alone or fully automating?”

Please do the mental work of coming up with reasons for YOU to use OUR product. We’re not going to actually present a product and its uses, we will just vaguely suggest things you could do with it – like plan your birthday party.

5. Description

Key takeaways

- Description is about communicating with AI in ways that create a productive collaborative environment

- Product Description involves clearly defining what you want in terms of outputs, format, audience, and style

- Process Description guides how the AI approaches your request, which can be as important as specifying the end goal

- Performance Description defines behavioral aspects like whether the AI should be concise or detailed, challenging or supportive

- AI systems are interactive partners, not databases or vending machines

- Clear communication up front saves time and leads to better results

Figure 14: Description - Don't just craft prompts. Explain tasks, ask questions, provide context, and guide the interaction. Build a shared thinking environment where both you and the AI can each do your best work.

Figure 15: Product Description - The ability to clearly define the characteristics of your desired output. Clearly describe what you want. Context, format, audience, style, and other constraints. Give the AI the information it needs to deliver what you're actually looking for, not just assuming what you want.

“The quality of AI outputs often depends on how clearly you describe what you want. It’s like the difference between asking someone to make dinner versus providing a detailed recipe with ingredients and cooking instructions.”

So like how I can’t just offload “please make dinner” to my husband and expect the task done, I have to also do the extra work of the mental preparation and planning for what needs to get done for that dinner? (Generally if I ask him to prepare dinner then he asks me to just order a pizza or fast food and he’ll pick it up.)

Also just more arguments that imply “if it’s not doing what you want it to, then YOU’RE using the software wrong.”

Figure 16: Process Description - The ability to guide the AI's thought process. "How" can be more important than "What". Providing training specific to your problem. Specific data, key tasks, preferred order, and so on.

Figure 17: Performance Description - The ability to define the behavioral aspects of an AI interaction. AI tools are interactive systems that can behave differently in different contexts. You need to explain how you want the AI to behave to get the best results.

“You might provide general guidance like a manual, step-by-step instructions like a cookbook, or even a demonstration through examples.”

I expect manuals to be detailed documentation, not general guidance?

If I’m writing out step-by-step instructions or doing examples, why don’t I just do the work myself, or delegate to another human?

Also have you SEEN how bad other people are at writing documentation and step-by-step processes?

“You need to explain how you want the AI to behave to get the best results.”

I don’t want to talk to my software. I want to talk to humans.

6. Deep Dive 2: Effective prompting techniques

Key takeaways

- Effective prompting combines clear communication principles with AI-specific techniques

- Six foundational prompting techniques:

- Give context: Be specific about what you want, why you want it, and relevant background

- Show examples: Demonstrate the output style or format you're looking for

- Specify constraints: Clearly define format, length, and other output requirements

- Break complex tasks into steps: Guide the AI through multi-step reasoning

- Ask the AI to think first: Give space for the AI to work through its process

- Define the AI's role or tone: Specify how you want the AI to communicate

- The "secret weapon": Ask the AI itself to help improve your prompt

- Successful prompting is iterative (and perhaps also collaborative with the AI!). Expect to refine your approach based on results

- Common successful patterns include providing clear task overviews, format specifications, explicit constraints, and relevant background information
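For reference, the first three techniques in the list above (context, examples, constraints) amount to assembling a block of text. Here is a minimal sketch, assuming a plain-text layout invented for this example; `build_prompt` and its section labels are hypothetical, and the example pair and task sentence are the ones shown in the course's slide. Nothing here calls a model.

```python
def build_prompt(context, examples, constraints, task):
    """Assemble a plain-text prompt from context, examples, and constraints."""
    lines = [f"Context: {context}", "", "Examples:"]
    for original, plain in examples:
        lines.append(f"- Original: {original}")
        lines.append(f"  Plain: {plain}")
    lines += ["", "Constraints:"]
    lines += [f"- {c}" for c in constraints]
    lines += ["", f"Task: {task}"]
    return "\n".join(lines)

prompt = build_prompt(
    context="Rewriting technical statements for a general audience.",
    examples=[(
        "The interface leverages intuitive design paradigms.",
        "The design is easy to understand and use.",
    )],
    constraints=["One sentence.", "Do not change the technical meaning."],
    task=(
        "Convert to plain language: The platform implements end-to-end "
        "encryption protocols to safeguard data integrity."
    ),
)
print(prompt)
```

The output is only a string; how well any particular model follows it is a separate question that this sketch does not address.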

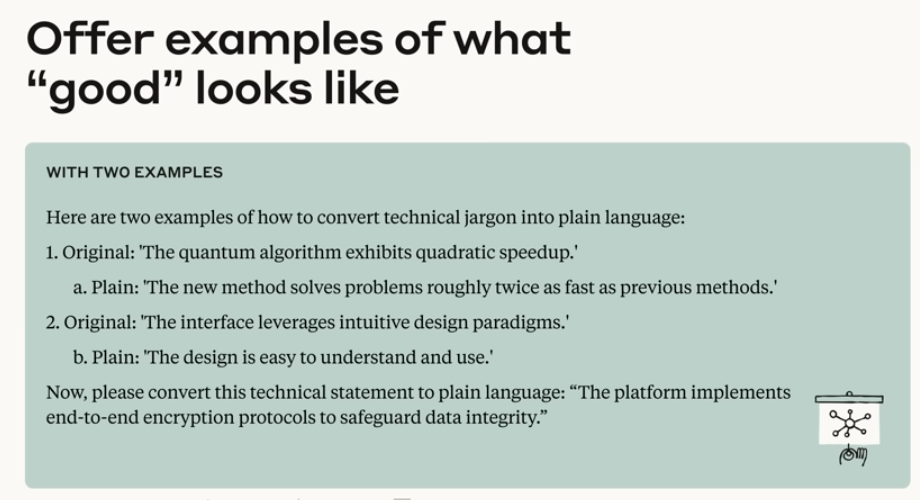

Figure 18: Offer examples of what "good" looks like. With two examples. Here are two examples of how to convert technical jargon into plain language: 1. Original: The quantum algorithm exhibits quadratic speedup. A. Plain: The new method solves problems roughly twice as fast as previous methods. 2. Original: The interface leverages intuitive design paradigms. B. Plain: The design is easy to understand and use. Now, please convert this technical statement to plain language: "The platform implements end-to-end encryption protocols to safeguard data integrity."

Offering AI examples of what good looks like…

Original: "The quantum algorithm exhibits quadratic speedup."

Plain: "The new method solves problems roughly twice as fast as previous methods."

QUADRATIC IS NOT 2x!

WHAT?!

This is an example of tasks you give to the AI, and example outputs you want out of it.

Here, the example output is… Hey, let's misrepresent the original information. Math? Who cares!
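To spell out the arithmetic: a "quadratic speedup" conventionally means the faster method needs on the order of the square root of the steps the slower one needs (Grover's search algorithm is the usual example, roughly √N queries instead of roughly N). The advantage therefore grows with the size of the problem instead of staying fixed at 2x. A small sketch, ignoring constant factors; `speedup_factor` is a name made up for this illustration.

```python
import math

def speedup_factor(n):
    """Ratio of ~n steps to ~sqrt(n) steps, ignoring constant factors."""
    return n / math.sqrt(n)

for n in (4, 100, 10_000, 1_000_000):
    print(f"n={n:>9,}: about {speedup_factor(n):,.0f}x fewer steps")
```

"Twice as fast" only matches at n = 4; at a million items the same quadratic speedup is about a thousandfold.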

"Ask the AI to think first"

I love telling my software to “work right” before giving it a task to do.

7. Discernment

Key takeaways

- Discernment is your ability to thoughtfully evaluate what AI produces, how it produces it, and how it behaves

- Product Discernment focuses on evaluating the quality of actual outputs (accuracy, appropriateness, coherence, relevance)

- Process Discernment involves assessing how the AI arrived at its output, looking for logical errors, attention gaps, or inappropriate reasoning

- Performance Discernment evaluates how the AI behaves within the collaboration process itself, considering whether its communication style is effective for your needs

- Discernment works hand-in-hand with Description in a continuous feedback loop

- Even the most advanced AI systems benefit from human judgment and oversight

Figure 19: Discernment - Your ability to critically evaluate what AI produces, how it produces it and how it behaves. You need: Domain expertise. An understanding of how AI systems work and their typical shortcomings.

Figure 20: Product Discernment - Your capacity to judge the quality of the AI output. Factually accurate? Appropriate to audience and purpose? Coherent and well-structured? Does it meet my requirements? Does it add value?

But yes, ask AI to explain quantum mechanics to you.

Figure 21: Process Discernment - Your capacity to judge the quality of the problem solving approach. Logical inconsistency. Lapses in attention. Inappropriate steps. Getting stuck on one small detail. Getting trapped in circular reasoning.

Figure 22: Performance Discernment - Your capacity to judge AI behaviors. Is the communication style appropriate? Is the information at the right level? Is the response to feedback appropriate? Is the interaction efficient?

Figure 23: Feedback and correction - Effective feedback includes: Specifying the problem. Clearly explaining why it is a problem. Providing concrete suggestions for improvement. Revising your instructions or examples.

8. Diligence

Key takeaways

- Diligence is about taking responsibility for our AI collaborations

- Creation Diligence involves being thoughtful about which AI systems we use and how we engage with them

- Transparency Diligence means being honest about AI's role in our work with everyone who needs to know

- Deployment Diligence requires taking responsibility for verifying and vouching for the outputs we use or share

- Different contexts (personal, academic, professional) may have different expectations for disclosure and verification

- Thoughtful Diligence helps ensure our AI collaborations are not only effective and efficient, but also ethical and safe

Figure 24: Diligence. Taking responsibility. Rigorous, transparent, and accountable. Consider broader questions. Responsibility starts with awareness.

Figure 25: Creation Diligence - Your ability to be critical and intentional about which AI systems you choose to use and how you use them. Be critically aware of: The AI systems that we use. How we work with them. The impacts that come from that interaction.

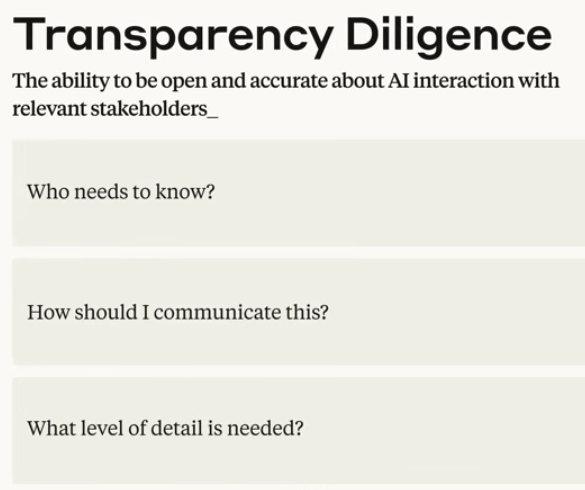

Figure 26: Transparency Diligence - The ability to be open and accurate about AI interaction with relevant stakeholders. Who needs to know? How should I communicate this? What level of detail is needed?

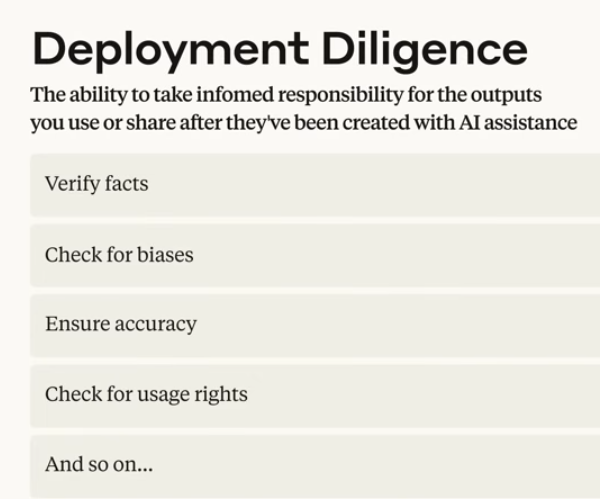

Figure 27: Deployment Diligence - The ability to take informed responsibility for the outputs you use or share after they've been created with AI assistance. Verify facts. Check for biases. Ensure accuracy. Check for usage rights. And so on…

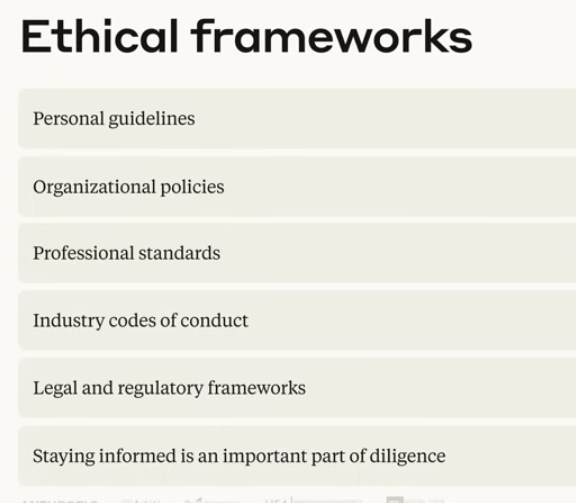

Figure 28: Ethical frameworks. Personal guidelines. Organizational policies. Professional standards. Industry codes of conduct. Legal and regulatory frameworks. Staying informed is an important part of diligence.

9. Conclusion & certificate

Key takeaways

- AI Fluency develops through intentional practice of the four core competencies

- Delegation emphasizes that our expertise and judgment remain the foundation of effective AI collaboration

- Description involves clear communication that bridges our intentions and AI capabilities

- Discernment requires thoughtful and critical evaluation of outputs to work within the system's constraints

- Diligence ensures accountability, transparency, and responsibility in our AI work

- The most powerful outcomes emerge when humans and AI build on each other's strengths

- The framework is designed to remain relevant as AI systems continue to evolve

10. Addendum: Things Copilot suggested to me while I edited this document

models can shift between different tasks ticeable.

countless fields from microbiologyds of.

needing additional tional.

crunching tons of human language nguage.

fantastic emergentreast.

AI can sometimes be like letely.

LLMs are somewhat unpredictablee and.

to think uch as.

having a brilliant colleague mputer.

ability to help will be limited no matter how smart they are" uter.

improving their reasoning capabilities pabilities.

software or script to tware.

AI systems as often as you can and develop your own stems.

Fully automating? tomated.

Created: blog/images/2026-04-20 Mon 10:13